Ruta Desai

I am a Staff Research Scientist in Fundamental AI Research (FAIR) at Meta. At FAIR, I am exploring reasoning and long-horizon planning problems towards agents that can partner with humans. Prior to that, I was a Tech Lead Manager at Meta Reality Labs Research. At Reality Labs, I led an embodied AI team focused on solving perception and planning problems for Augmented Reality (AR) systems that can extend their users' capabilities. I am broadly interested in perception, planning, and reasoning problems for Human-AI interaction.

I obtained my PhD at the Robotics Institute, Carnegie Mellon University in 2018, working with Stelian Coros and Jim McCann. For my thesis, I devised human-AI systems that enabled casual users to build and program robots, with the goal of making robotics more accessible. In the past, I have also had not-so-brief stints in the area of human motor control and biomechanical simulations with Hartmut Geyer and Jessica K. Hodgins.

Email: ruta.p.desai[at]gmail.com

Publications

Digital and Physical Agents

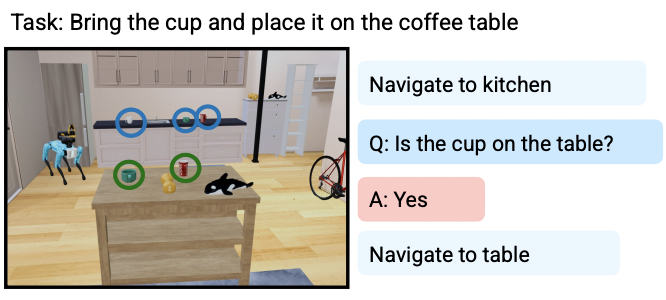

Ask-to-Act: Grounding Multimodal LLMs to Embodied Agents that Ask for Help with Reinforcement Learning


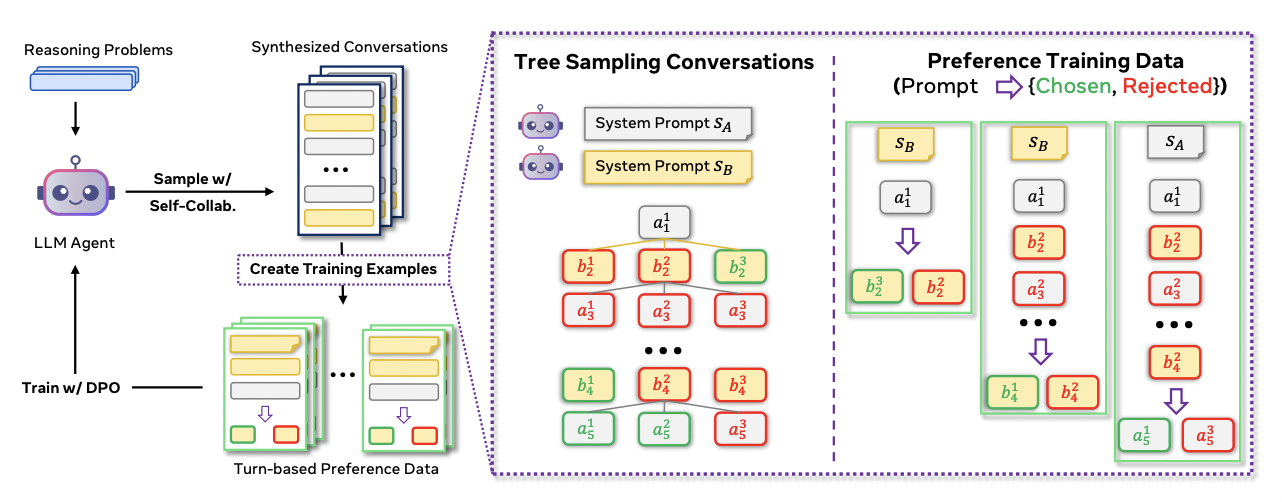

Collaborative Reasoner: Self-improving Social Agents with Synthetic Conversations


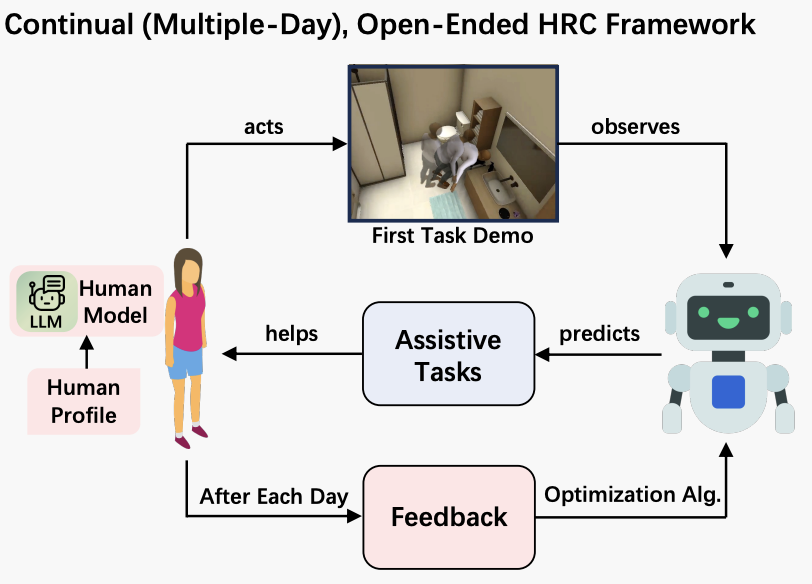

COOPERA: Continual Open-Ended Human-Robot Assistance


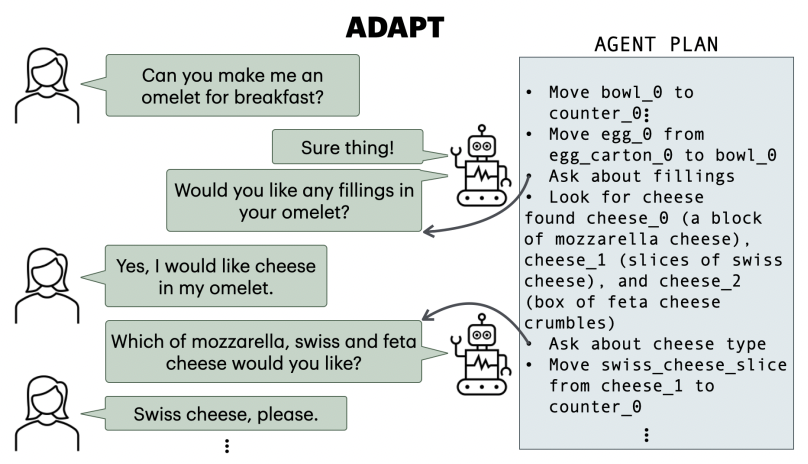

ADAPT: Actively Discovering and Adapting to Preferences for any Task


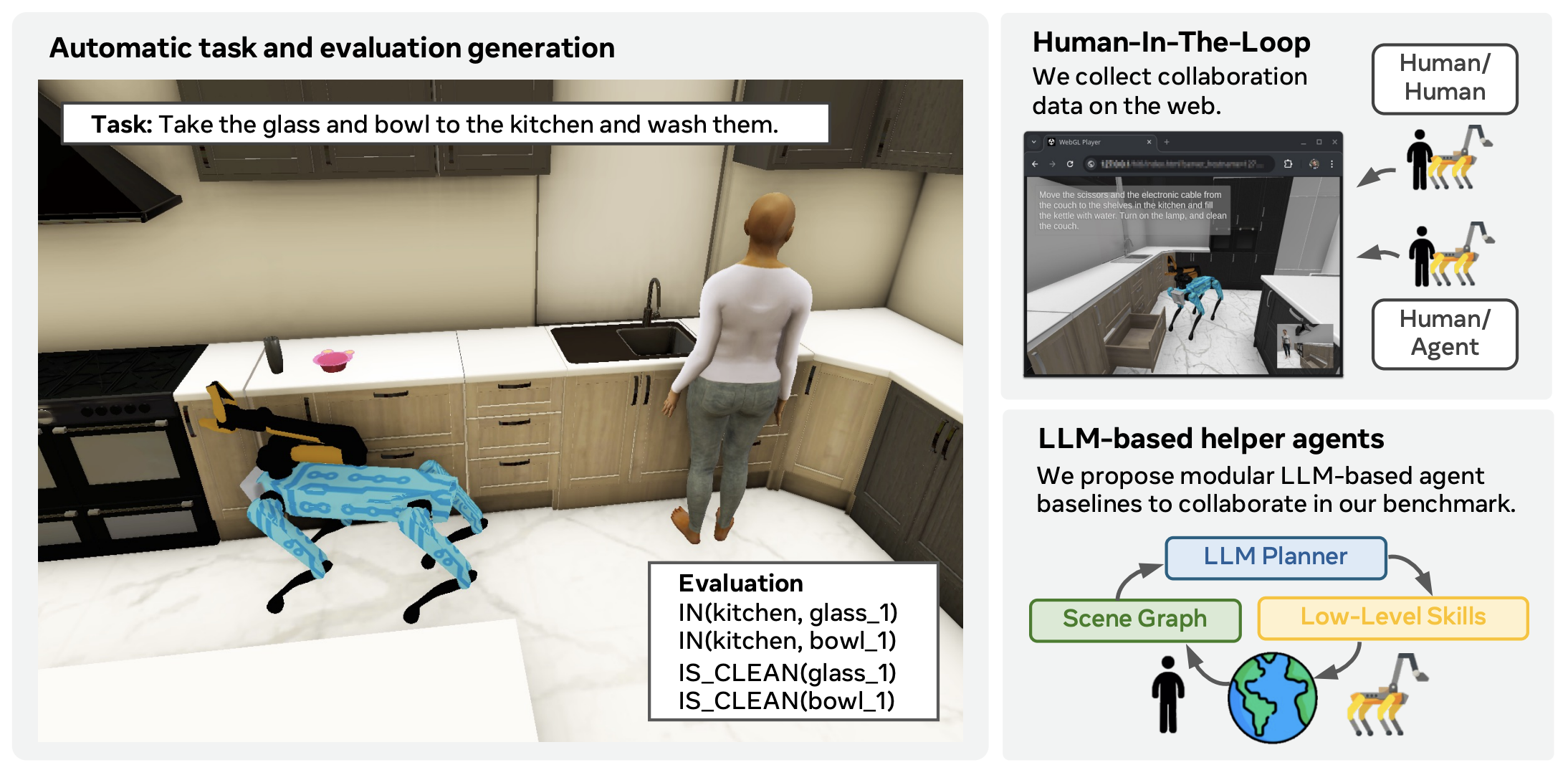

PARTNR: A Benchmark for Planning and Reasoning in Embodied Multi-agent Tasks


Habitat 3.0: A Co-Habitat for Humans, Avatars and Robots


Adaptive Coordination in Social Embodied Rearrangement


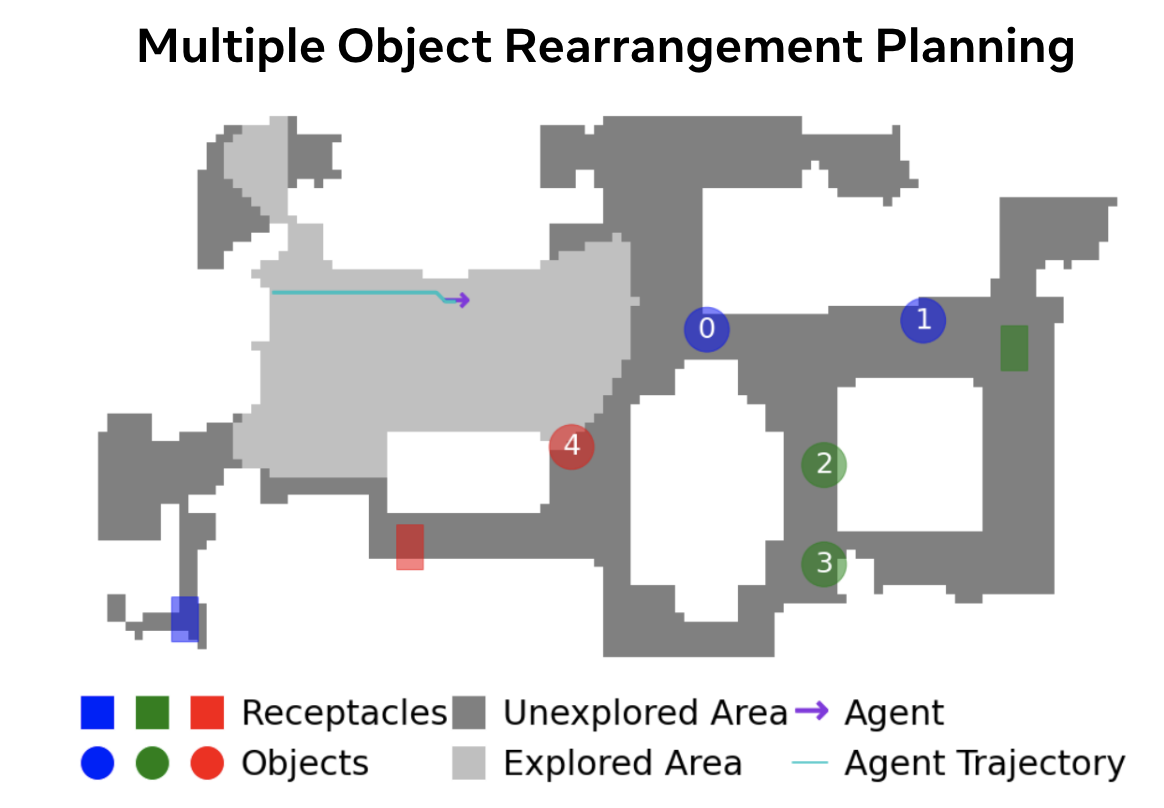

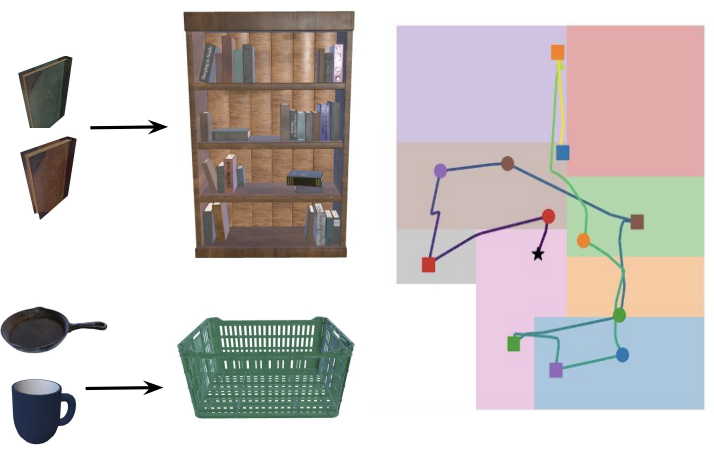

Effective Baselines for Multiple Object Rearrangement Planning in Partially Observable Mapped Environments


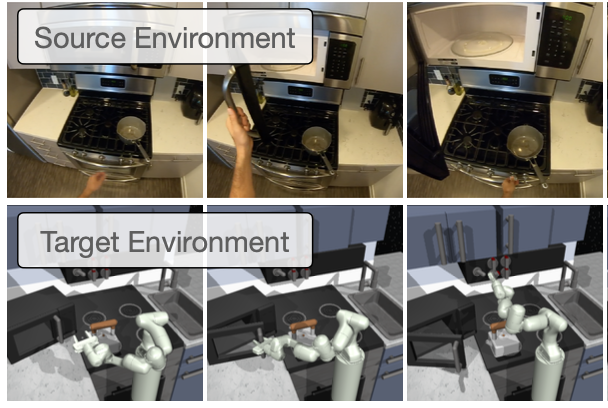

Cross-Domain Imitation Learning via Semantic Skills

Vision and Language

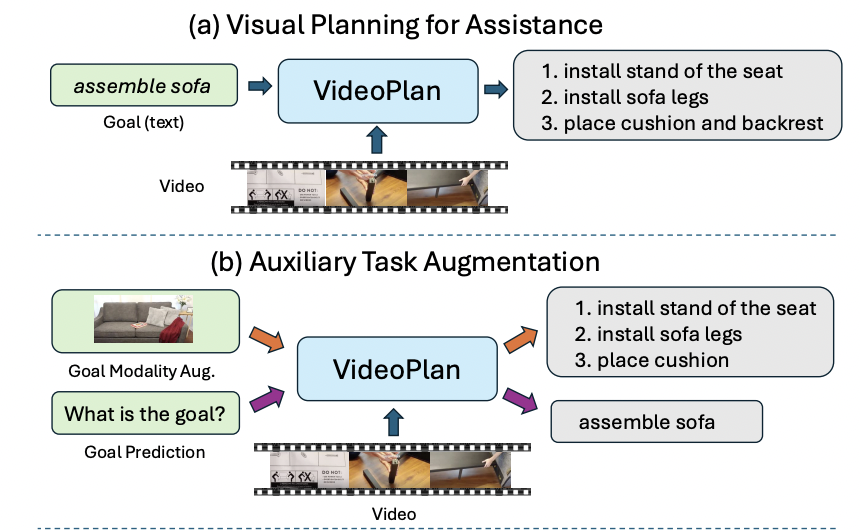

Enhancing Visual Planning with Auxiliary Tasks and Multi-token Prediction


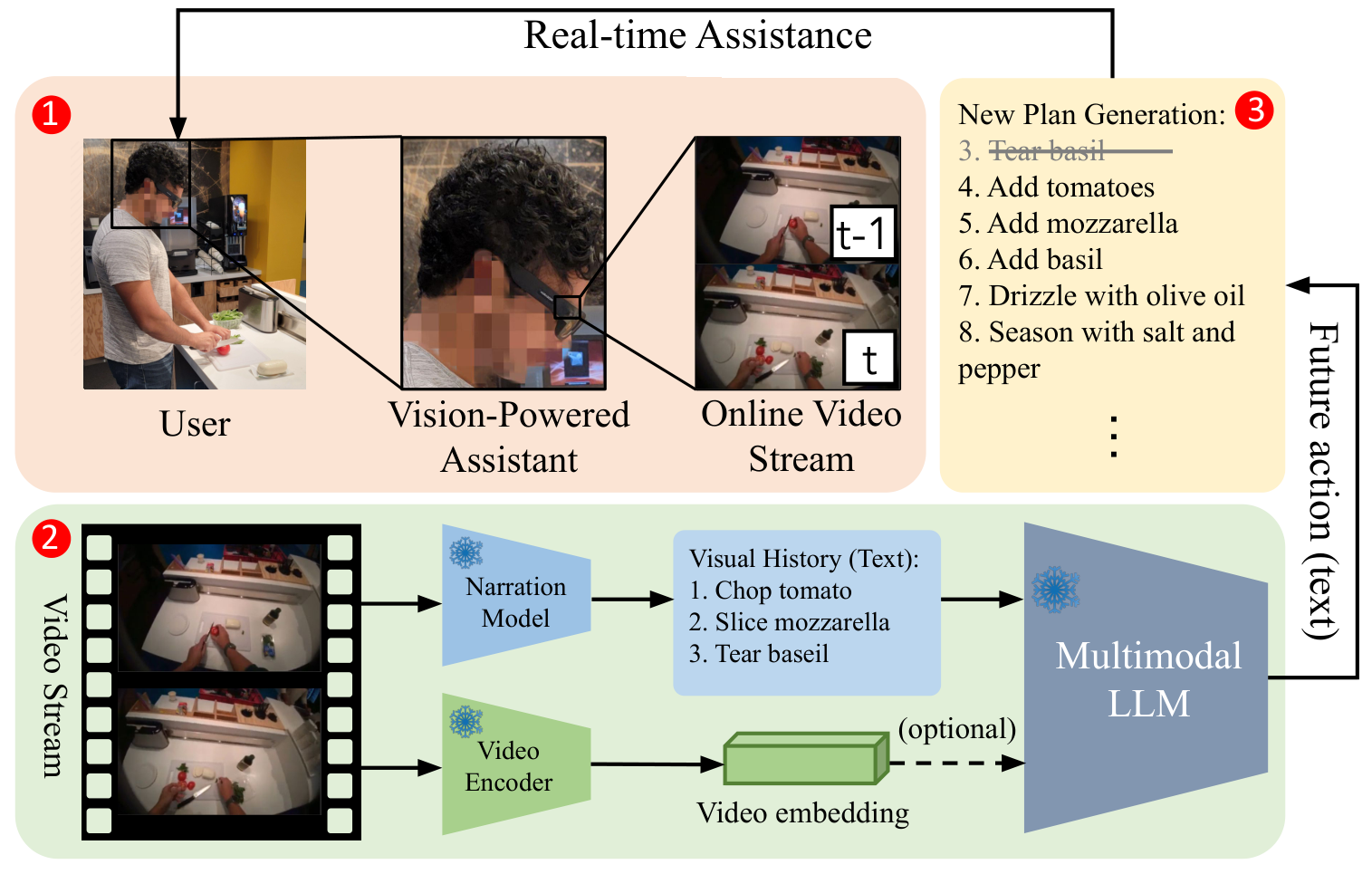

User-in-the-loop Evaluation of Multimodal LLMs for Activity Assistance


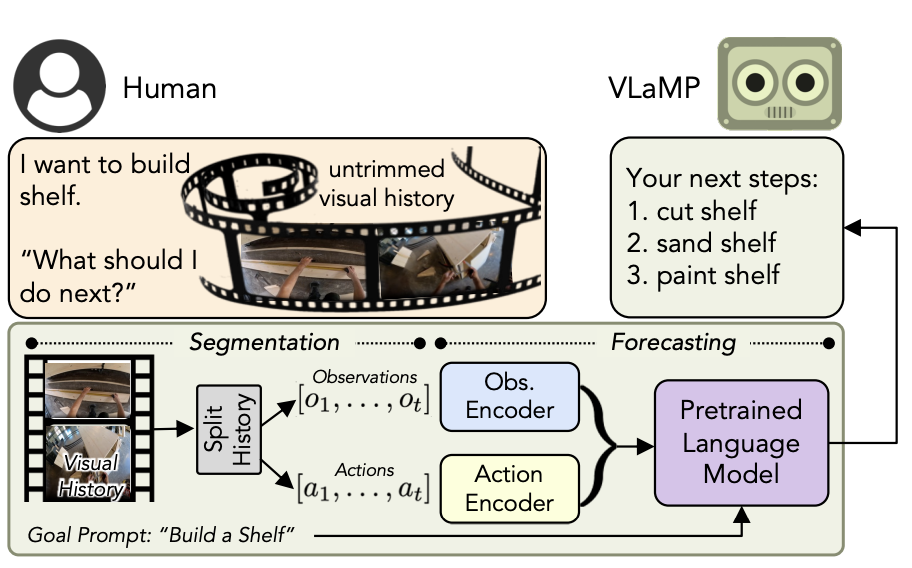

Pretrained Language Models as Visual Planners for Human Assistance


EgoTV: Egocentric Task Verification from Natural Language Task Descriptions


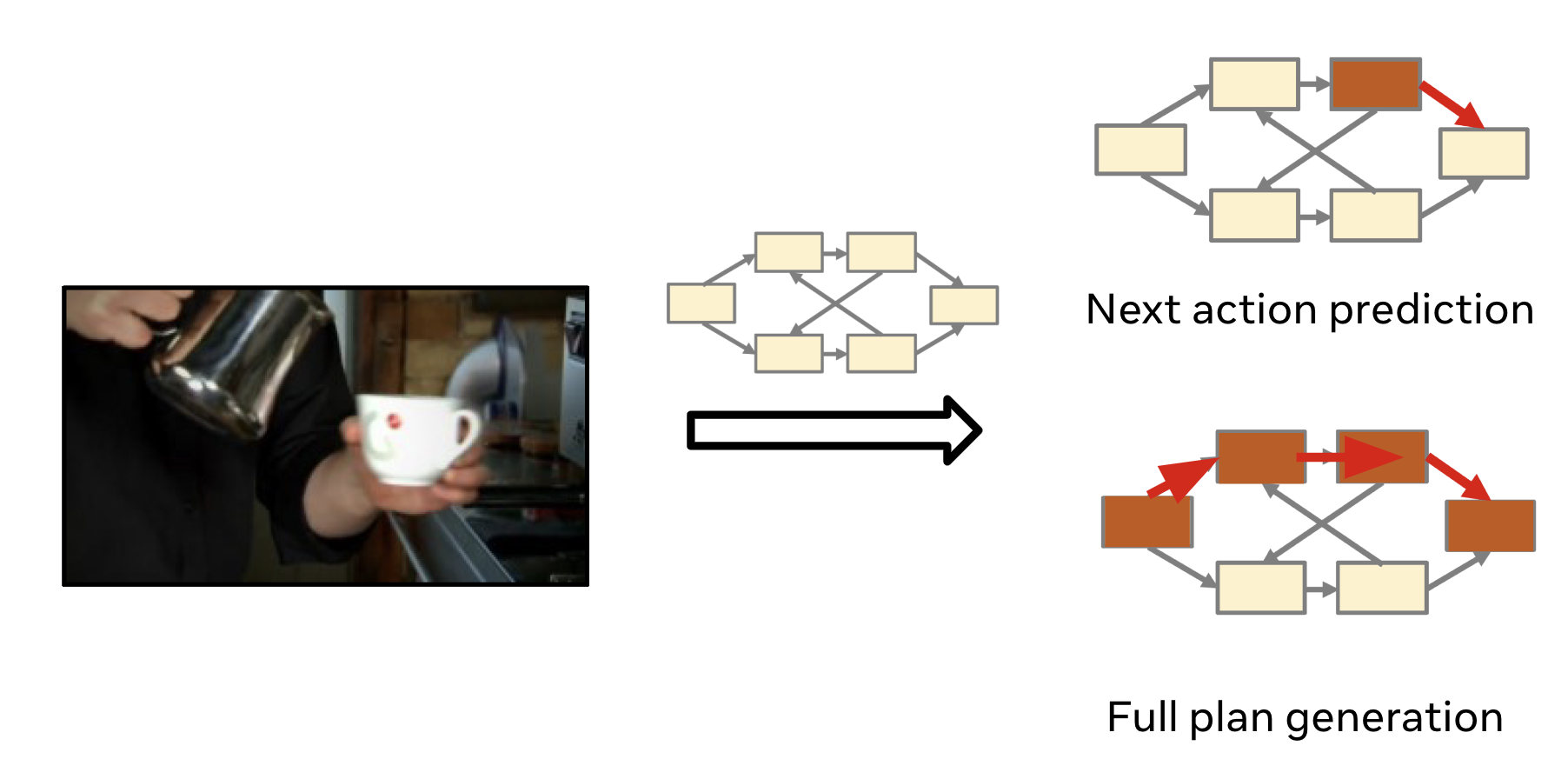

Action Dynamics Task Graphs for Learning Plannable Representations of Procedural Tasks


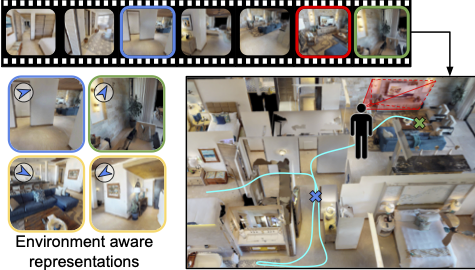

Egocentric Scene Context for Human-centric Environment Understanding from Video


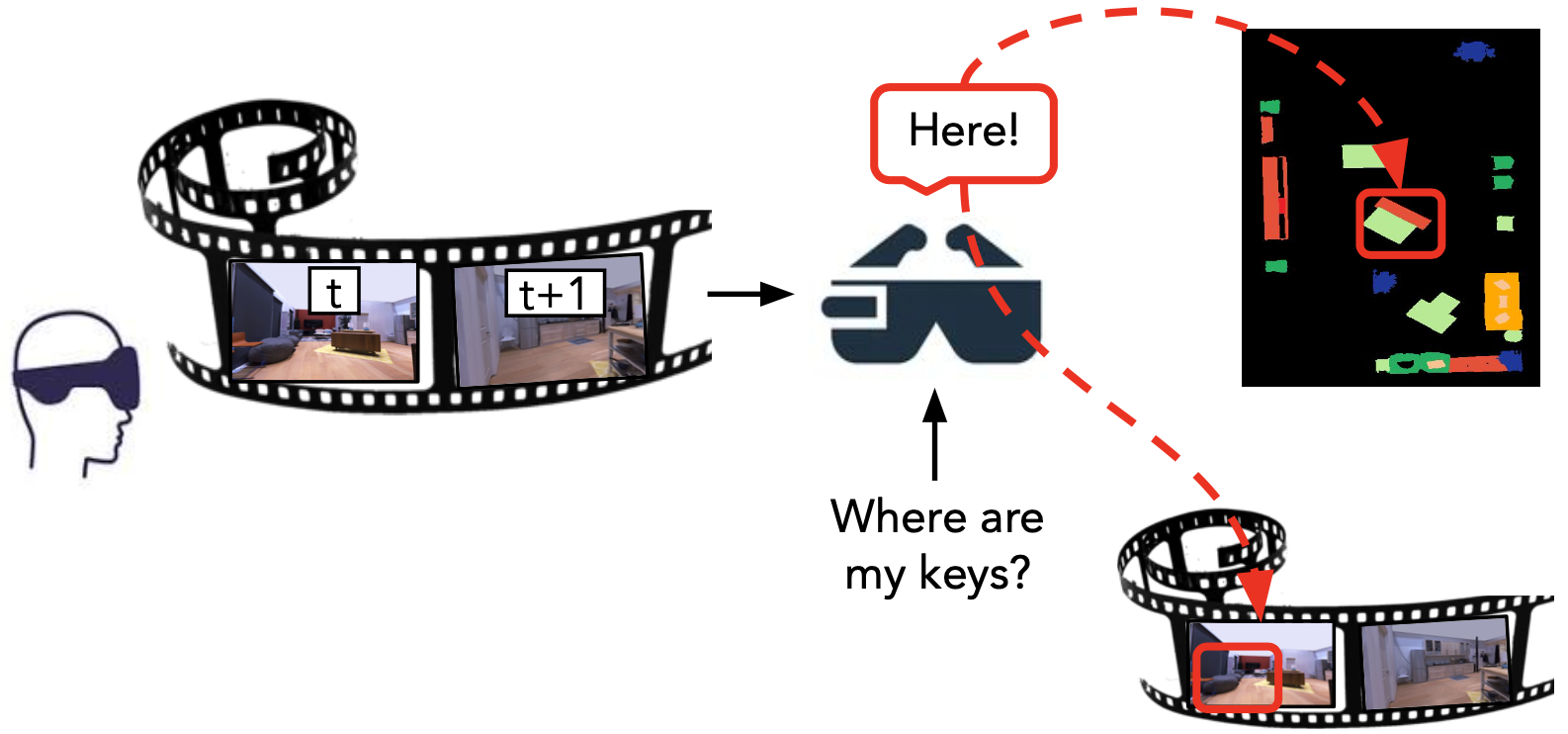

Episodic Memory Question Answering


How You Move Your Head Tells What You Do: Self-supervised Video Representation Learning with Egocentric Cameras and IMU Sensors

Human-AI Interaction

Interactive Program Synthesis for Modeling Collaborative Physical Activities from Narrated Demonstrations


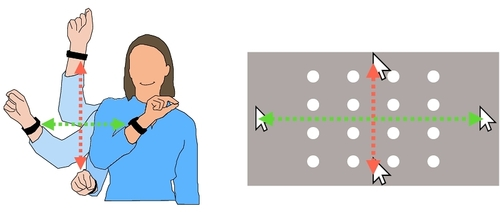

A Meta-Bayesian Approach for Rapid Online Parametric Optimization for Wrist-based Interactions


Optimizing the Timing of Intelligent Suggestion in Virtual Reality


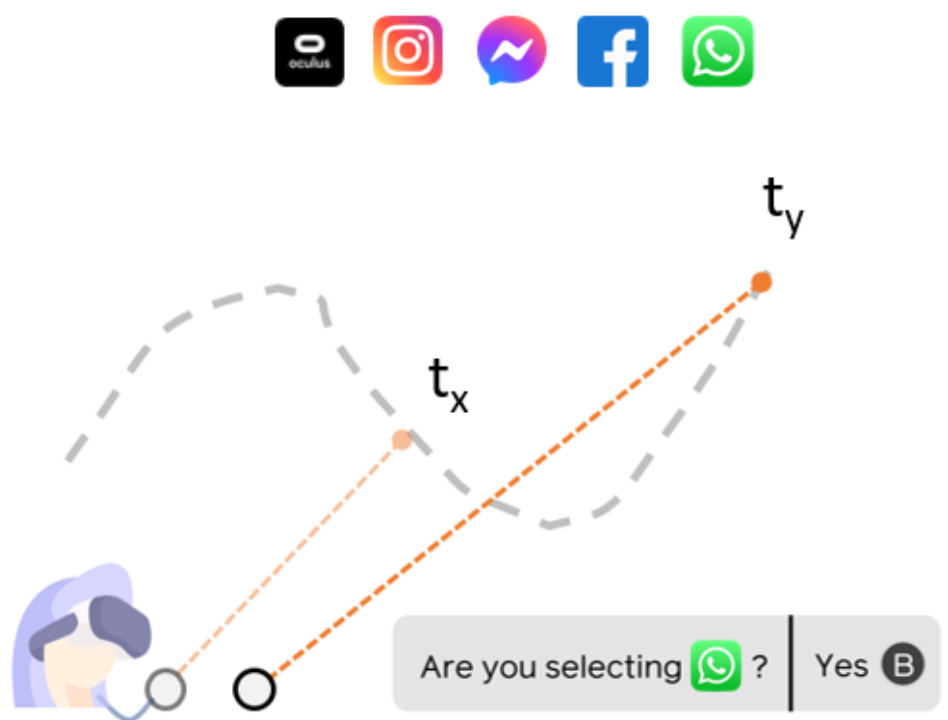

Optimal Assistance for Object-Rearrangement Tasks in Augmented Reality


Towards Inferring Cognitive State Changes from Pupil Size Variations in Real World Conditions


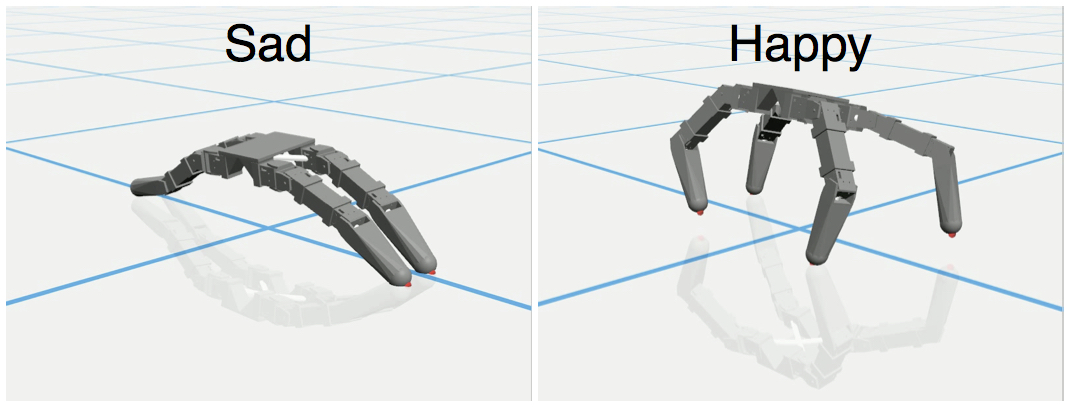

Geppetto: Enabling Semantic Design of Expressive Robot Behaviors


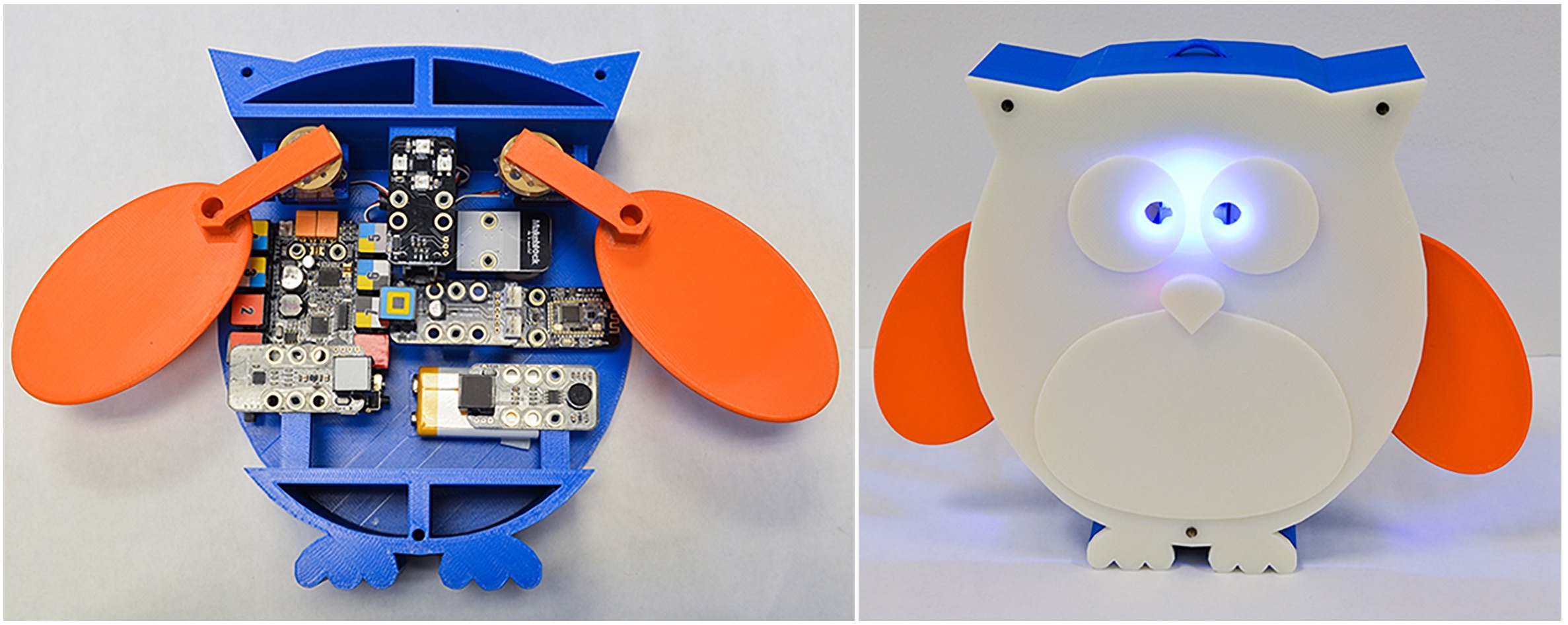

Assembly-aware Design of Printable Electromechanical Devices


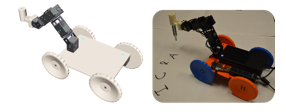

Skaterbots: Optimization-based Design and Motion Synthesis for Robotic Creatures with Legs and Wheels


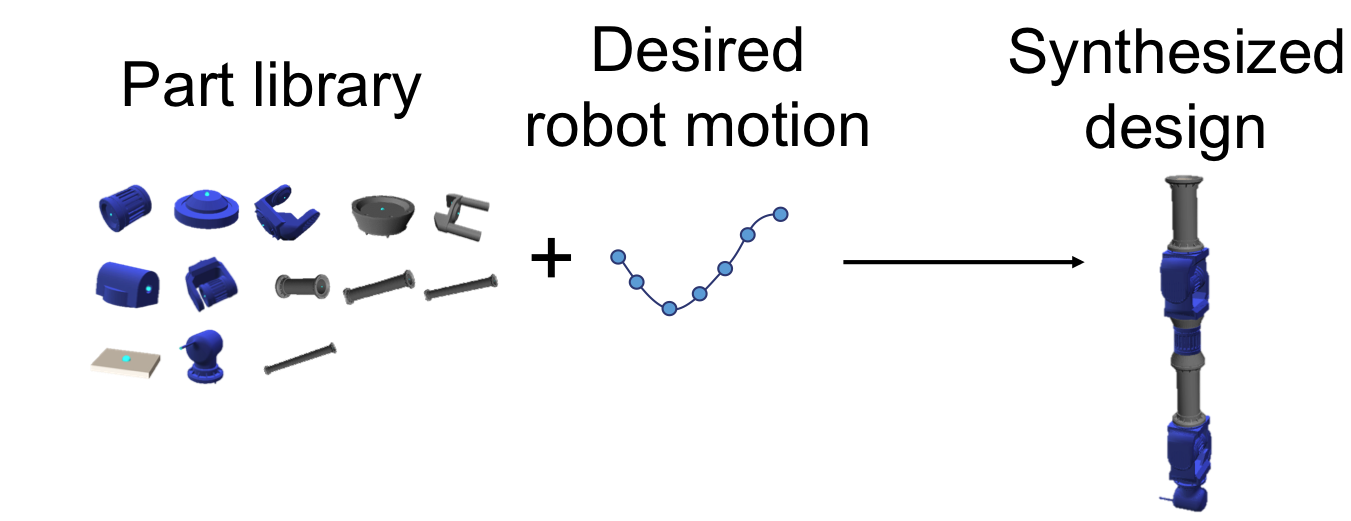

Automatic Design of Task-specific Robotic Arms


Interactive Co-Design of Form and Function for Legged Robots using the Adjoint Method


Computational Abstractions for Interactive Design of Robotic Devices


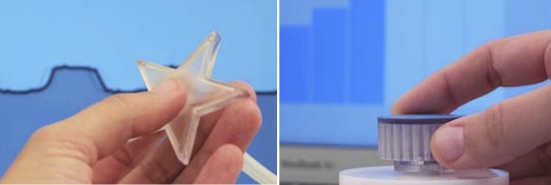

3D Printing Pneumatic Device Controls with Variable Activation Force Capabilities

Marynel Vazquez, Eric Brockmeyer, Ruta Desai, Chris Harrison and Scott E. Hudson ACM Conference on Human Factors in Computing Systems (CHI), Seoul, Korea (2015). PDF | bib | Poster | Video

Human Motion Modeling, Human Motor Control, Biomechanics

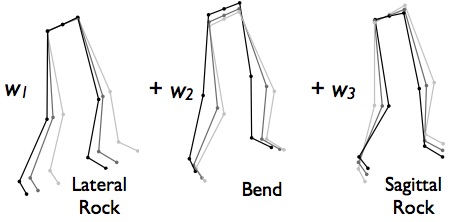

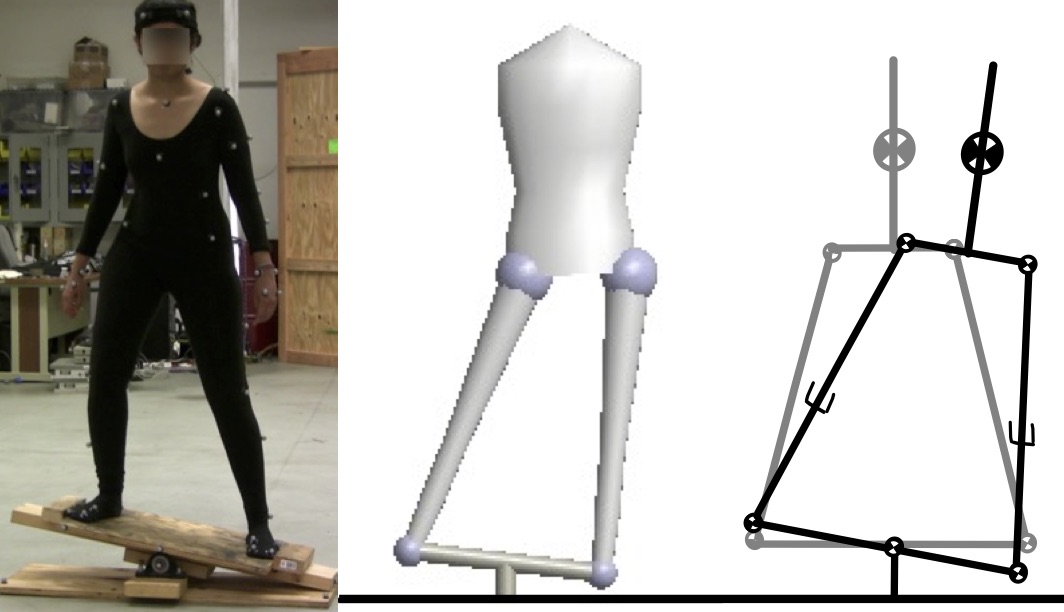

A Simple Model of Skill Acquisition in a Dynamic Balance Task

Ruta Desai and Jessica K. Hodgins Dynamic Walking, Columbus, USA (2015). PDF | bib | Poster | Video

Virtual Model Control for Dynamic Lateral Balance

Ruta Desai, Hartmut Geyer, and Jessica K. Hodgins IEEE International Conference on Humanoid Robots (Humanoids), Madrid, Spain (2014). PDF | bib

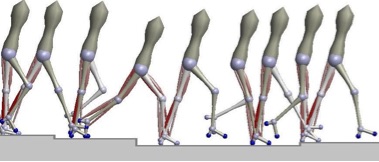

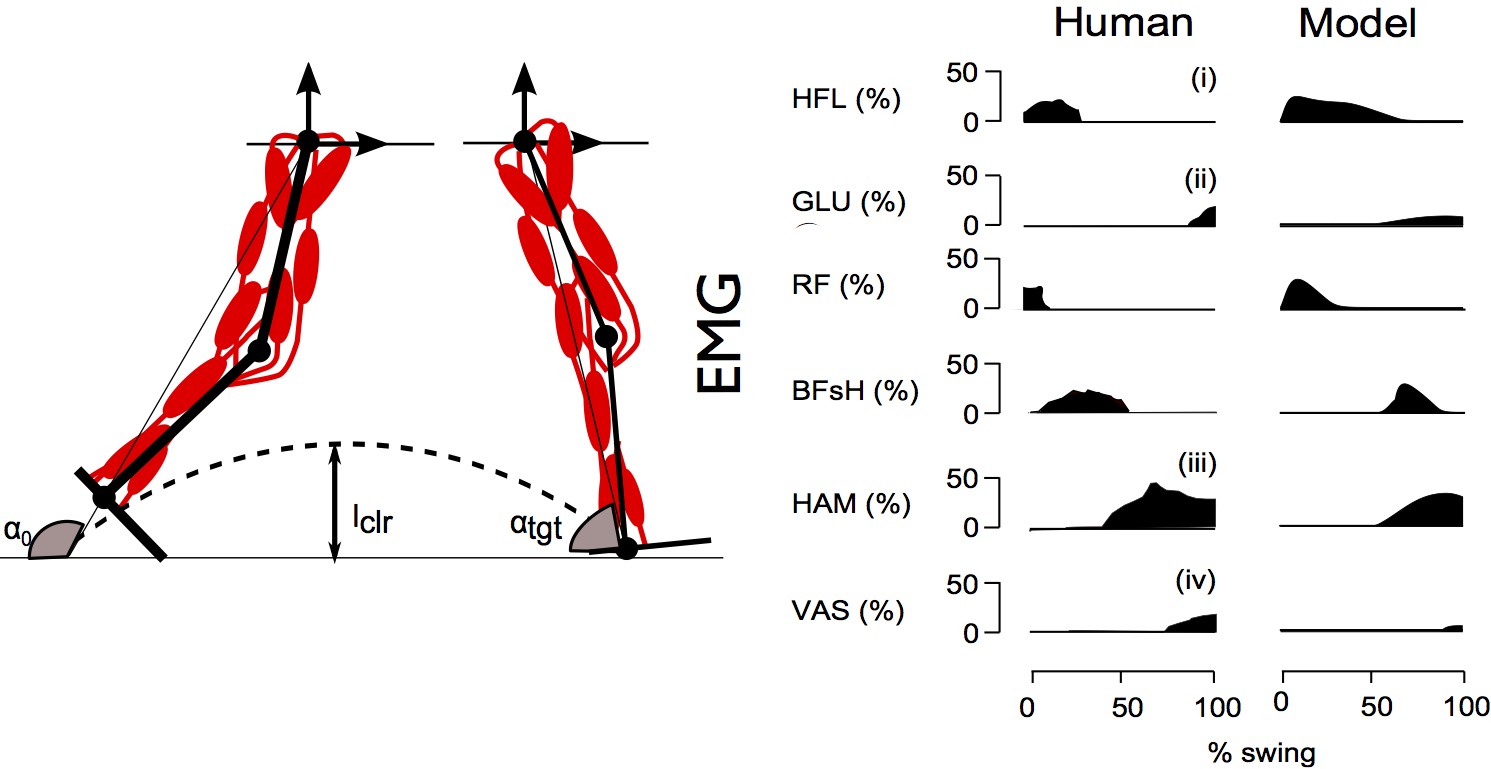

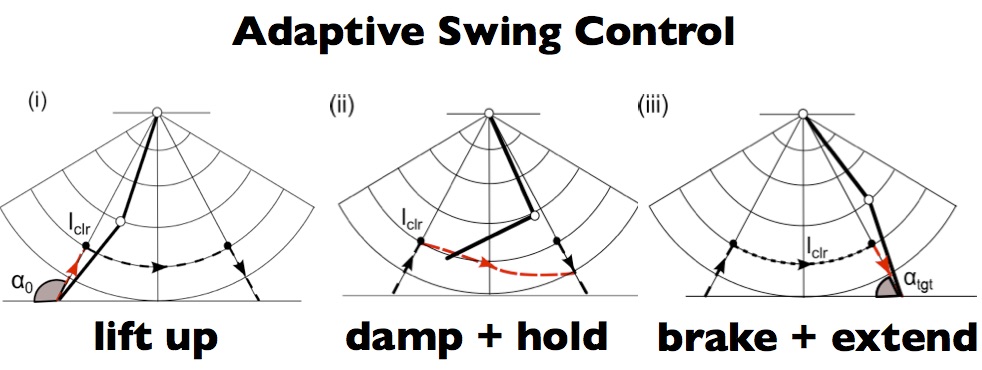

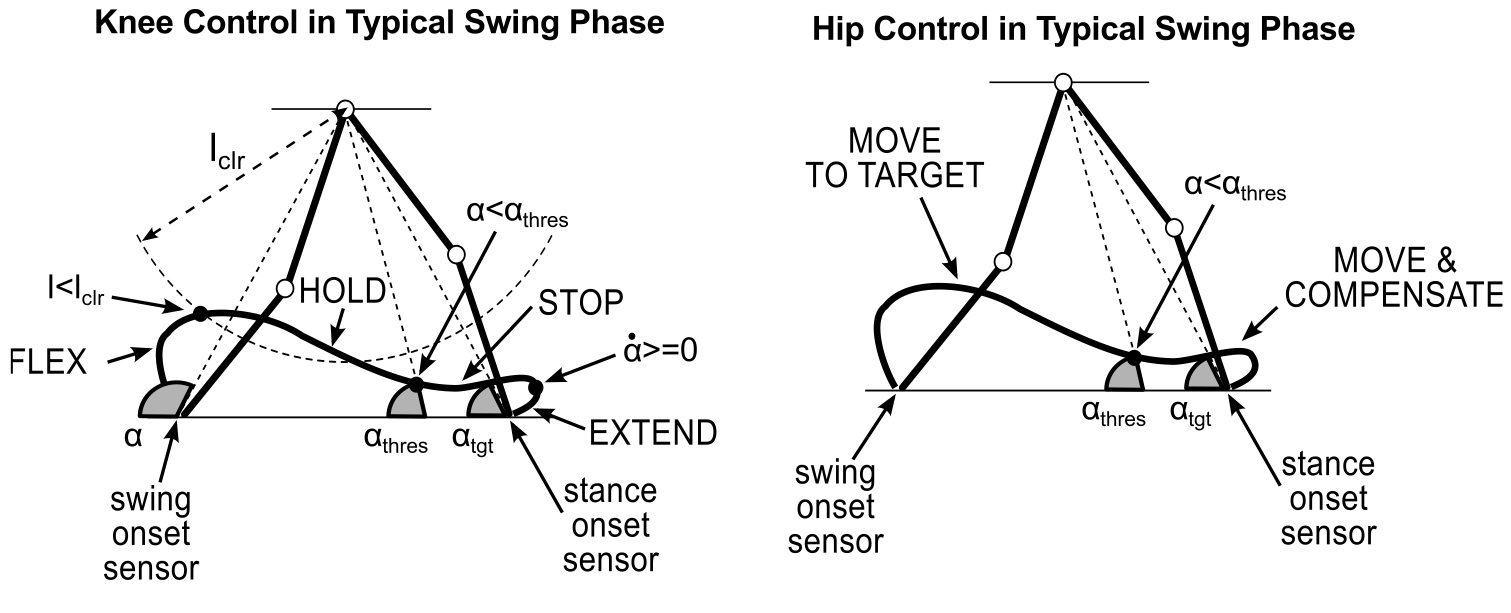

Integration of an Adaptive Swing Control into a Neuromuscular Human Walking Model

Seungmoon Song, Ruta Desai, and Hartmut Geyer IEEE Engineering in Medicine and Biology Society (EMBC), Osaka, Japan (2013). PDF | bib | Video

Muscle-Reflex Control of Robust Swing Leg Placement

Ruta Desai and Hartmut Geyer IEEE International Conference on Robotics and Automation (ICRA), Karlsruhe, Germany (2013). PDF | bib

Robust Swing Leg Placement under Large Disturbances

Ruta Desai and Hartmut Geyer IEEE International Conference on Robotics and Biomimetics (ROBIO), Guangzhou, China (2012). PDF | bib | Video

Selected Patents and Preprints

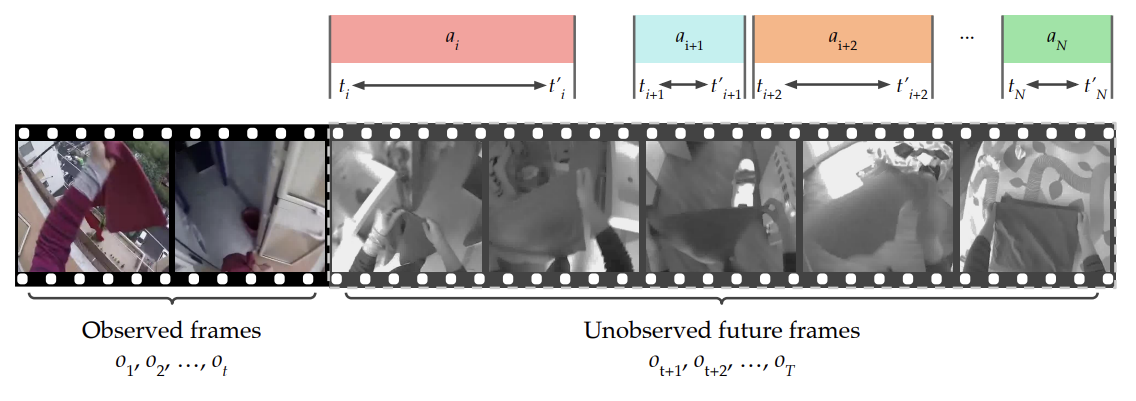

Human Action Anticipation: A Survey


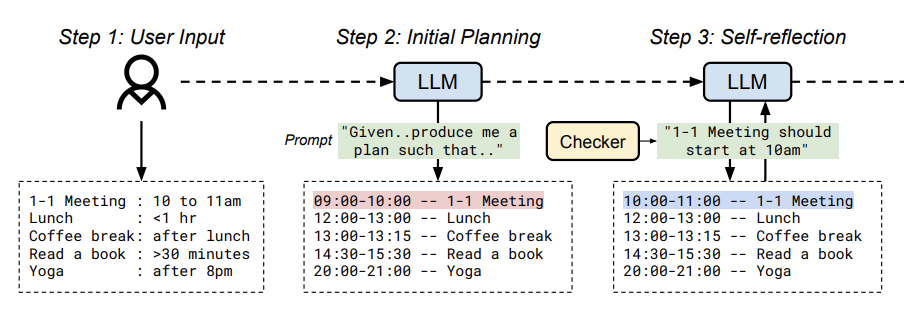

Human-Centered Planning


Optimal Assistance for Object-Rearrangement Tasks in Augmented Reality

Benjamin A. Newman, Kevin T. Carlberg, Ruta Desai, James Hillis US Patent US20220114366 A1 (2022).

Generative design techniques for robot behavior

Fraser Anderson, Stelian Coros, Ruta Desai, Tovi Grossman, Justin Matejka, George Fitzmaurice US Patent 20200030988 A1 (2020).

Robust swing leg controller under large disturbances

Ruta Desai and Hartmut Geyer US Patent 20150066156 A1 (2014).

A Brief Overview of Human and Robot Motor Learning

Ruta Desai RI CMU Technical Report

Academic Service

Conference Program Committee Chair and Reviewer

Talks and Teaching

Outreach

Women in Technology

I am always looking to support and contribute towards encouraging women in science and technology. In the past:

Selected Press